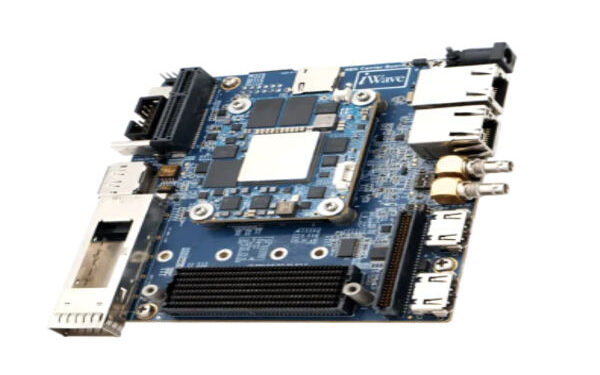

As Generative AI (GenAI) continues to transform industries, the need for powerful and efficient computing platforms is growing rapidly. The Versal AI Edge VE2302 System on Module (SoM) stands out as a robust solution designed to accelerate Large Language Models (LLMs) both at the edge and within data centers. Built on AMD’s Versal Adaptive SoC technology, the module delivers a seamless blend of high performance, energy efficiency, and AI acceleration, making it a strong foundation for next-generation AI and machine learning applications.

iWave is excited to join forces with RaiderChip to push the boundaries of AI acceleration. Together, they are delivering next-generation GenAI and LLM acceleration by blending advanced hardware with intelligent software, bringing powerful AI capabilities closer to edge devices. iWave continues to lead in the design and development of Versal FPGA-based System on Modules, enabling scalable and efficient AI solutions.

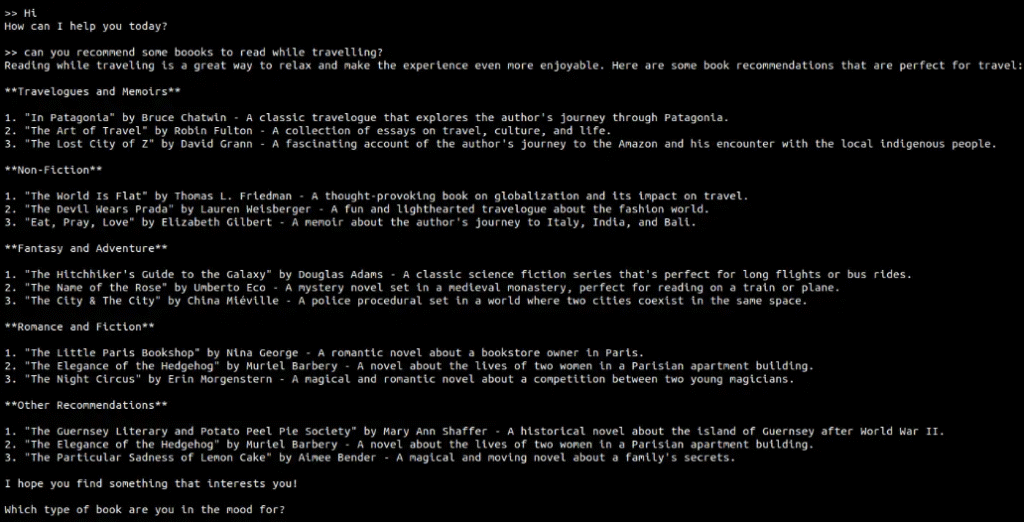

With RaiderChip’s implementation of the Meta Llama 3.2 LLM running on the iW-RainboW-G57M Versal VE2302 System on Module, the setup achieves a throughput of around 12 tokens per second, delivering fast and reliable on-device AI processing. This level of performance makes the module easy to integrate into a variety of edge systems and a strong fit for real-time applications such as intelligent chatbots, predictive analytics, and responsive AI assistants.

Get a firsthand look at iWave’s GenAI NPU Edge LLM demo powered by the Versal AI Edge VE2302 SoM. The chatbot generates around 12 tokens per second, fast enough for smooth, real-time conversation. Built for simplicity and responsiveness, it handles AI-driven conversations with low latency. From customer engagement and process automation to AI experimentation, the demo highlights the capabilities of on-device LLM acceleration.
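To put the quoted throughput in perspective, the short Python sketch below converts a decode rate into an average per-token latency and a total reply time. It assumes a constant rate of about 12 tokens per second and ignores prompt processing (prefill) and interface overhead; the reply lengths are hypothetical examples, not measurements from the demo.

```python
# Rough decode-time estimate from a token rate.
# Assumptions: a constant decode rate of ~12 tokens/s (the figure quoted for the demo);
# prompt processing (prefill) and network/UI overhead are ignored.

TOKENS_PER_SECOND = 12.0


def per_token_latency_ms(tokens_per_second: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return 1000.0 / tokens_per_second


def generation_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a constant decode rate, in seconds."""
    if num_tokens < 0:
        raise ValueError("num_tokens must be non-negative")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    print(f"{per_token_latency_ms(TOKENS_PER_SECOND):.1f} ms per token")
    for n in (60, 120, 240):  # hypothetical reply lengths in tokens
        print(f"{n} tokens -> {generation_time_s(n, TOKENS_PER_SECOND):.1f} s")
```

Under these assumptions, each token arrives roughly every 83 ms, so a 120-token reply streams in about 10 seconds.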

GenAI NPU Edge LLM Demonstration on iWave’s Versal AI Edge VE2302 System on Module

Key Technical Specifications of the iW-RainboW-G57M Versal System on Module

- Supports VE2302 / VE2202 / VE2102 / VE2002 variants

- Up to 328K logic cells and 150K LUTs

- 8 GTYP transceivers operating at 32 Gbps

- Memory options: Up to 8GB LPDDR4, 128GB eMMC, and 256MB QSPI

- Dual 240-pin high-speed connectors for robust interfacing

- Connectivity options include PCIe Gen4, Ethernet, and USB 3.0

The Versal AI Edge-based System on Module is compatible with the VE2302, VE2202, VE2102, and VE2002 devices. It supports up to 8GB of LPDDR4 RAM, 128GB of eMMC storage, and 256MB of QSPI flash, providing a strong foundation for data-intensive edge workloads. The module also provides two high-speed expansion connectors and 122 user-configurable I/Os, giving developers flexible integration and a variety of interface options.

Designed to support diverse connectivity needs, the SoM includes 28.21 Gbps high-speed transceiver blocks capable of handling essential edge communication protocols. It also supports 40G multi-rate Ethernet, PCIe, and native MIPI interfaces, which are crucial for vision-based and other advanced AI-driven applications.

Why the Versal AI Edge is Ideal for AI Applications

- Optimized AI Engines-ML deliver high-performance inference with ultra-low latency

- Native support for ML data types including INT8 and BFLOAT16 for efficient computation

- 4 MB on-chip accelerator RAM enhances memory hierarchy to boost AI performance

- AI and DSP Engines enable advanced AI, vision, radar, and LiDAR processing

- Programmable I/O allows seamless integration with diverse sensors and interfaces

- Programmable Logic supports sensor fusion and real-time data pre-processing

- Integrated Processing System provides embedded compute power and precise real-time control
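Native INT8 and BFLOAT16 support matters on a module with up to 8GB of LPDDR4, because weight storage scales directly with the number of bytes per element. The Python sketch below estimates raw weight memory for a model of a given parameter count. The 1-billion-parameter figure is a hypothetical example, and the estimate deliberately ignores the KV cache, activations, and the operating system, all of which also consume memory.

```python
# Rough estimate of LLM weight storage for different numeric formats.
# Assumptions: every weight uses the same format, and the KV cache,
# activations, and OS memory are NOT counted.

BYTES_PER_ELEMENT = {
    "float32": 4,
    "bfloat16": 2,
    "int8": 1,
}


def weight_footprint_bytes(num_params: int, dtype: str) -> int:
    """Raw bytes needed to store num_params weights in the given format."""
    if num_params < 0:
        raise ValueError("num_params must be non-negative")
    try:
        width = BYTES_PER_ELEMENT[dtype]
    except KeyError:
        raise ValueError(f"unsupported dtype: {dtype!r}") from None
    return num_params * width


def to_gib(num_bytes: int) -> float:
    """Convert bytes to GiB (2**30 bytes)."""
    return num_bytes / 2**30


if __name__ == "__main__":
    params = 1_000_000_000  # hypothetical 1B-parameter model, not a measured figure
    for dtype in ("float32", "bfloat16", "int8"):
        size = to_gib(weight_footprint_bytes(params, dtype))
        print(f"{dtype:>8}: {size:.2f} GiB")
```

With these assumptions, moving from BFLOAT16 to INT8 halves the weight storage, leaving more of the module's memory for the KV cache and application code.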

Software Stack Empowering GenAI on the Versal AI Edge SoM

- Vitis AI Toolkit: Offers pre-optimized AI libraries, model compression utilities, and a complete development environment to accelerate AI model deployment on the Versal AI Edge SoM.

- ONNX Runtime & TensorFlow Lite: Enable seamless integration and deployment of AI models across the Versal platform with minimal configuration.

- PyTorch Integration: Supports deep learning and Generative AI workflows, empowering developers to accelerate Transformer-based models using high-performance FPGA execution.

Key Advantages of RaiderChip’s Edge AI Solution

- Complete Privacy and Control: Run powerful LLMs locally without depending on cloud infrastructure or exposing data to third-party monitoring.

- Offline Functionality: Operates in remote or disconnected environments, with no internet connection or subscription required.

- Customizable Models: Supports both open-source and commercially licensed LLMs like Llama, with options for task-specific fine-tuned models.

Emerging Frontiers of Generative AI

- Intelligent Assistants: Development of versatile AI agents capable of managing complex tasks across industries, from technical troubleshooting and healthcare diagnostics to education, customer engagement, and automotive systems.

- Seamless Human-Machine Interaction: Establishing a universal interface that allows users to communicate naturally with devices of all kinds, from industrial equipment to everyday home appliances.

- Next-Generation Predictive Systems: Leveraging generative AI’s advanced pattern recognition to deliver highly accurate forecasts across critical domains such as defense, aviation, cybersecurity, industrial operations, and financial markets.

The iW-RainboW-G57M, a cutting-edge Versal AI Edge System on Module, is optimized with GenAI LLM acceleration to deliver exceptional AI performance with minimal latency and superior energy efficiency. It is purpose-built to support advanced workloads such as natural language processing, conversational AI, and real-time generative AI, both at the edge and within data centers. iWave equips developers with a comprehensive ecosystem of tools, software frameworks, and libraries, enabling them to fully exploit the capabilities of the Versal AI Edge platform.

With more than 25 years of expertise in the FPGA industry, iWave stands as a global innovator in designing and manufacturing FPGA-based System on Modules and offering end-to-end ODM design services. The company’s deep technical proficiency and strong design-to-deployment capabilities help transform innovative concepts into market-ready solutions that balance performance, reliability, and cost efficiency.